(A seven-minute read)

I HAVE WRITTEN ON THIS SUBJECT IN PREVIOUS POSTS, IN WHICH I ADVOCATED THAT THERE IS AN URGENT NEED TO GET A HANDLE ON WHAT I CALL COMMERCIAL ARTIFICIAL INTELLIGENCE.

ALL FORMS OF AI, WHETHER THEY BE APPS OR PRODUCTS CONTAINING ALGORITHMS, SHOULD BE VETTED BY AN INDEPENDENT WORLD ORGANIZATION TO ENSURE THEIR TRANSPARENCY AND ACCOUNTABILITY.

Like all threats, the threat that Artificial Intelligence poses to the world will only be recognised when it is too late.

WHY?

Because: We live in a world where there is very little left that is biennial.

We can rest assured that the world of technology will follow suit, creating more inequality than anything we have seen to date.

In the old days, you would need a rule set to say ‘if this happens, do that.’

With AI there is no such mantra. It’s a free-for-all, irrespective of any legal system or ethics.

Because: We are only beginning to scratch the surface with AI chatbots.

The sudden surge in interest in AI is closely linked to big data, a more recent tech trend that has breathed fresh life into commercial AI development for profit.

General-purpose AI is still, at least for now, the domain of science fiction.

Real-life AI software tends to be much more purpose-driven and limited in its applicability. But that doesn’t mean businesses can’t see real value from more modest AI applications.

The market for AI applications is white-hot with huge potential, but that potential needs to be tempered by a heavy dose of realism about the capabilities and business value of artificial intelligence technology.

It’s sort of captured the imagination of the world in general, but the danger we have with AI is expectations getting too high.

What’s different this time is cheap storage, which has allowed companies to stash huge troves of data, a critical need for training machine learning algorithms — the “brains” behind artificial intelligence. Computing power has increased to the point where algorithms can churn through all this data nearly instantaneously.

Facebook announced this month that it would allow businesses to build chatbots using the AI engine in its Messenger app.

Microsoft made a similar announcement last month.

IBM has been one of the bigger players in the AI platform space ever since it made Watson available to developers.

So far developers have used it to build smarter travel planning assistants, shopping recommendation engines and health coaches.

Google, Facebook and other technology giants are racing to apply the technology to consumer products. All are placing serious bets on deep learning, neural networks and natural language processing.

Facebook, the social media maven, recently signaled its commitment to advancing these types of machine learning by hiring Yann LeCun, a well-regarded authority on deep learning and neural nets, to head up its new artificial intelligence (AI) lab.

Insurance companies are looking at applying it to the process of approving medical claims.

Retailers are applying it to customer service and marketing, with enterprise technology companies like Salesforce looking to embed it in their software.

But even as businesses are finding real value in AI applications, there’s a widening pitfall.

Success breeds hype, which itself leads to inflated expectations. Should burgeoning AI software fail to live up to unrealistic expectations, it could brew disappointment and stain the technology.

In fact, artificial intelligence has come so far so fast in recent years, it will be pervasive in all new products by 2020.

So we are at a tipping point …

Artificial intelligence belongs to the frontier, not to the textbook.

Artificial intelligence is expected to be ubiquitous within just five years, as developers gain access to cognitive technologies through readily available algorithms.

Artificial intelligence chatbots aren’t the norm yet, but within the next five years, there’s a good chance the sales person emailing you won’t be a person at all.

All of this is proceeding without much scrutiny. So in this post I have perforce analyzed the matter from my own perspective, given my own conclusions, and done my best to support them in limited space.

Let’s start with a useful definition of artificial intelligence.

The term “Artificial Intelligence” refers to a vastly greater space of possibilities than does the term “Homo sapiens.” When we talk about “AIs” we are really talking about minds-in-general, or optimization processes in general. It is the theory and development of computer systems able to perform tasks that normally require human intelligence.

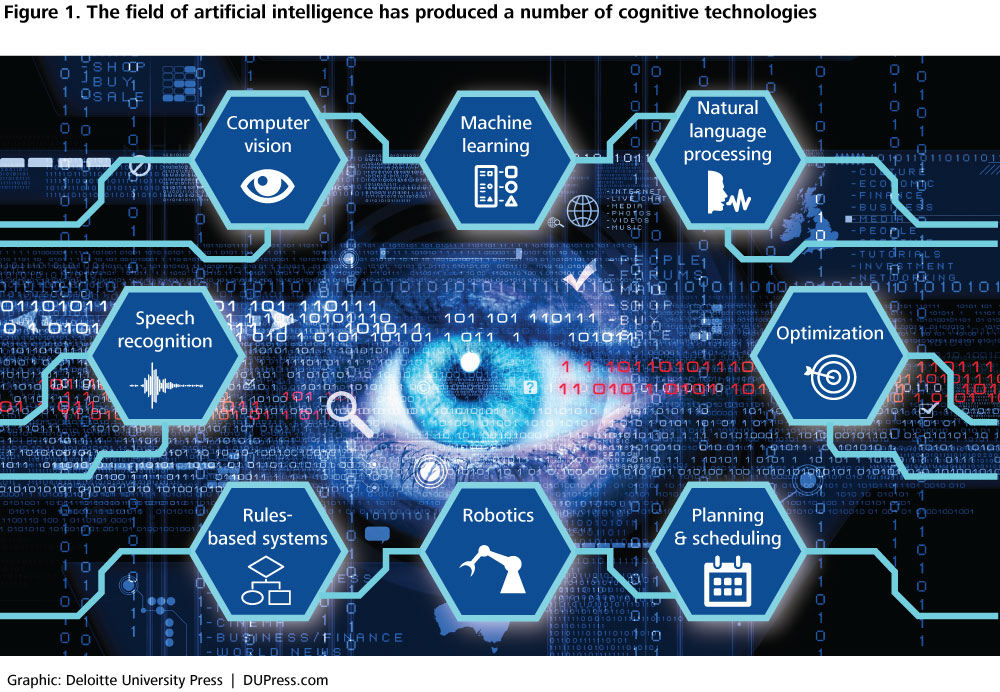

Cognitive technologies are products of the field of artificial intelligence.

They are able to perform tasks that only humans used to be able to do.

Organizations in every sector of the economy are already using cognitive technologies in diverse business functions.

If current trends in performance and commercialization continue, we can expect the applications of cognitive technologies to broaden and adoption to grow.

The billions of investment dollars that have flowed to hundreds of companies building products based on machine learning, natural language processing, computer vision, or robotics suggest that many new applications are on their way to market.

We also see ample opportunity for organizations to take advantage of cognitive technologies to automate business processes and enhance their products and services.

If you look at the technology we have today, you could say that it is the knack of so arranging the world that we don’t have to experience it.

We must execute the creation of Artificial Intelligence as the exact application of an exact art.

And maybe then we can win.

I suspect that, pragmatically speaking, our alternatives boil down to becoming smarter or becoming extinct.

Historians will look back and describe the present world as an awkward in-between stage of adolescence, when humankind was smart enough to create tremendous problems for itself, but not quite smart enough to solve them.

We are, for the moment, subject to natural selection, which isn’t friendly, nor does it hate you, nor will it leave you alone.

The point about underestimating the potential impact of Artificial Intelligence is symmetrical around potential good impacts and potential bad impacts.

When something is universal enough in our everyday lives, we take it for granted to the point of forgetting it exists.

It may be tempting to ignore Artificial Intelligence because of all the other global risks, but we do so AT GRAVE RISK OF CREATING A DIGITALLY DIVIDED WORLD.

We cannot query our own brains for answers about nonhuman optimization processes, whether bug-eyed monsters, natural selection, or Artificial Intelligences.

How then may we proceed?

How can we predict what Artificial Intelligences will do?

The human species came into existence through natural selection, which operates through the non-chance retention of chance mutations.

Artificial Intelligence comes about through a similar accretion of working algorithms, with the researchers having no deep understanding of how the combined system works. Nonetheless they believe the AI will be friendly, with no strong visualization of the exact processes involved in producing friendly behavior, or any detailed understanding of what they mean by friendliness.

Friendly AI is an impossibility, because any sufficiently powerful AI will be able to modify its own source code to break any constraints placed upon it.

This does not imply the AI has the motive to change its own motives.

Sufficiently tall skyscrapers don’t potentially start doing their own engineering.

Humanity did not rise to prominence on Earth by holding its breath longer than other species.

Humans evolved to model other humans—to compete against and cooperate with our own conspecifics.

Robots will not.

It is the mistaken belief that an AI will be friendly that implies an obvious path to global catastrophe.

Artificial Intelligence is not an amazing shiny expensive gadget to advertise in the latest tech magazines.

Artificial Intelligence does not belong in the same graph that shows progress in medicine, manufacturing, and energy.

Artificial Intelligence is not something you can casually mix into a lumpen futuristic scenario of skyscrapers and flying cars and nanotechnological red blood cells that let you hold your breath for eight hours.

A sufficiently powerful Artificial Intelligence could overwhelm any human resistance and wipe out humanity. (And the AI would decide to do so.)

Therefore we should not build AI.

On the other hand:

A sufficiently powerful AI could develop new medical technologies capable of saving millions of human lives. (And the AI would decide to do so.)

Therefore we should build AI.

Once computers become cheap enough, the vast majority of jobs will be performable by Artificial Intelligence more easily than by humans.

A sufficiently powerful AI would even be better than us at math, engineering, music, art, and all the other jobs we consider meaningful. (And the AI will decide to perform those jobs.) Thus after the invention of AI, humans will have nothing to do, and we’ll starve or watch television.

So should we prefer that nanotechnology precede the development of AI, or that AI precede the development of nanotechnology?

As presented, this is something of a trick question.

The answer has little to do with the intrinsic difficulty of nanotechnology as an existential risk, or the intrinsic difficulty of AI. So far as ordering is concerned, the question we should ask is, “Does AI help us deal with nanotechnology? Does nanotechnology help us deal with AI?”

The danger of confusing general intelligence with Artificial Intelligence is that it leads to tremendously underestimating the potential impact of Artificial Intelligence.

The best way I can think of to train computers is to get them to watch a lot of videos and observe what they predict.

Prediction is the essence of intelligence.

All scientific ignorance is hallowed by ancientness.

Here is a closing thought.

When a Super Intelligent Robot returns to Earth from a voyage in space, how can it be trusted to tell us the truth?

Exactly how AI systems should be integrated together is still up for debate.

With every advance, and particularly with the more recent advances in machine learning and deep learning, we get more tools to fuck up the world we all live on.

Ours is a less than excessively age.

We know so much and feel so little.

All comments welcome, all like clicks chucked in the bin.